Please read this first to understand the context of this post.

They are asking silly questions that need to be put to rest.

1. Unemployment. What happens after the end of jobs?

It has been established very strongly that weak minds fear technological advancement. If you are not ready to upgrade yourself, you become stagnant in your current work. So: overruled! We should have one objective: advancement in technology should not be held back for the sake of existing professions unless it is unethical. Ask the truck drivers about the convenience of remote car locking (I am sure they use it). Why did they not protest on behalf of locksmiths? It's a statement that creates unnecessary tension.

2. Inequality. How do we distribute the wealth created by machines?

Why? If you stumble across a pot of gold in AI by providing some solution, you choose how to spend the money you own. Did anyone ask how to distribute the money <some-business-tycoon> earned from their business? Such events happen, and since machines do not own wealth, there is no inequality here. So what is this question for?

3. Humanity. How do machines affect our behaviour and interaction?

We might need to start training everyone on this front. Already we (at least I) struggle with our behaviour and interactions with fellow humans; add another human-like AI, with no heart and no consciousness, and you only make things more complicated. Hence the need for training. Related post here.

4. Artificial stupidity. How can we guard against mistakes?

Remember what Einstein said about stupidity? We should address things that are solvable, not things that are unpredictable. Can we program stupidity? I think we are still far from it. (Stupid != foolish.)

5. Racist robots. How do we eliminate AI bias?

Why do we put racist behavior inside AI in the first place? Fix the root and you get better fruit. See the resolution to #7 for more on this.

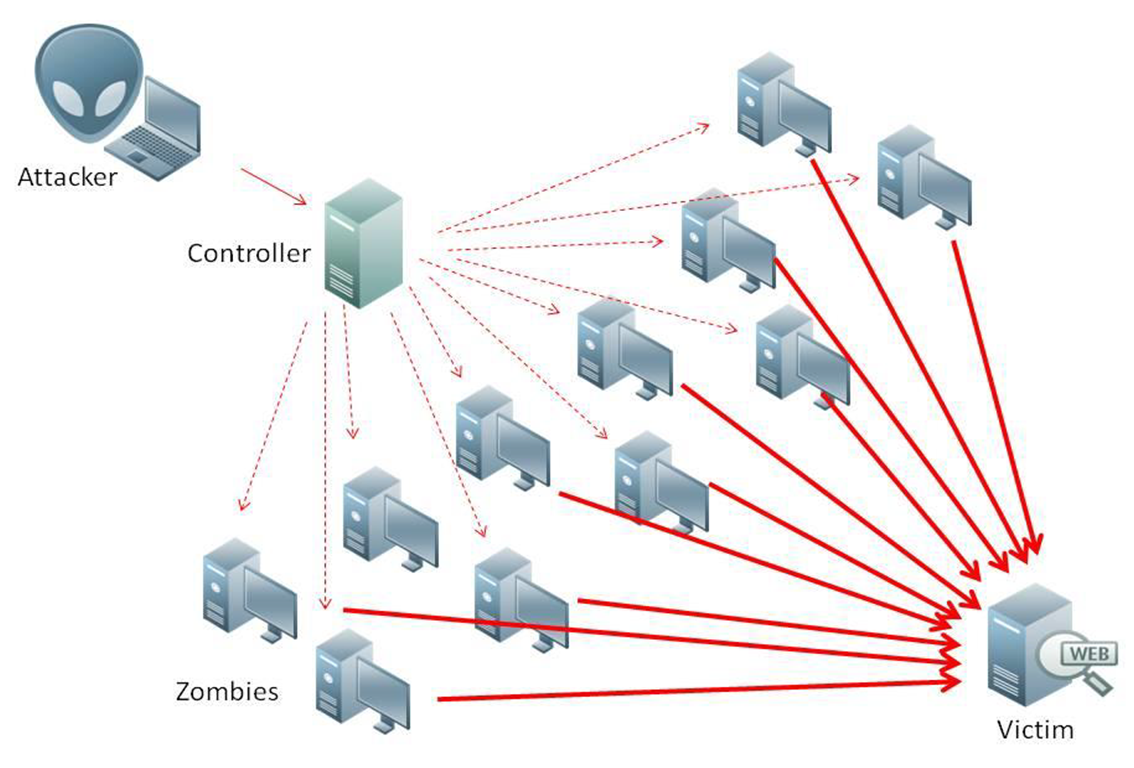

6. Security. How do we keep AI safe from adversaries?

Propaganda. Read my resolution for it in my earlier post here.

7. Evil genies. How do we protect against unintended consequences?

Simply have an insurance policy for AI: any person or entity that produces an AI product will be held responsible for every consequence arising from it. And that ownership will be lifelong, not conditional and not lease-based. I do not know why we have forgotten Newton's third law!

8. Singularity. How do we stay in control of a complex intelligent system?

Two examples come to mind: Alphabet and the UN. One is a perfectly managed complex system (Alphabet) and the other is a hopeless mess of crap (the UN). Learn from Alphabet. I doubt there is any other organization that convincingly and genuinely works for us humans.

9. Robot rights. How do we define the humane treatment of AI?

I am surprised this question is being raised by heartless minds. Rights are for entities that have life (insects, plants, animals, and humans qualify). Have we even reached a state where human rights are protected across the whole planet, never mind the others? Until we reach that state, it is unfair to ask for something called robot rights. Did we ever ask for the rights of TVs, cameras, or toasters? Again, I refer to this post here.

I hope this takes the thought process in a better direction.